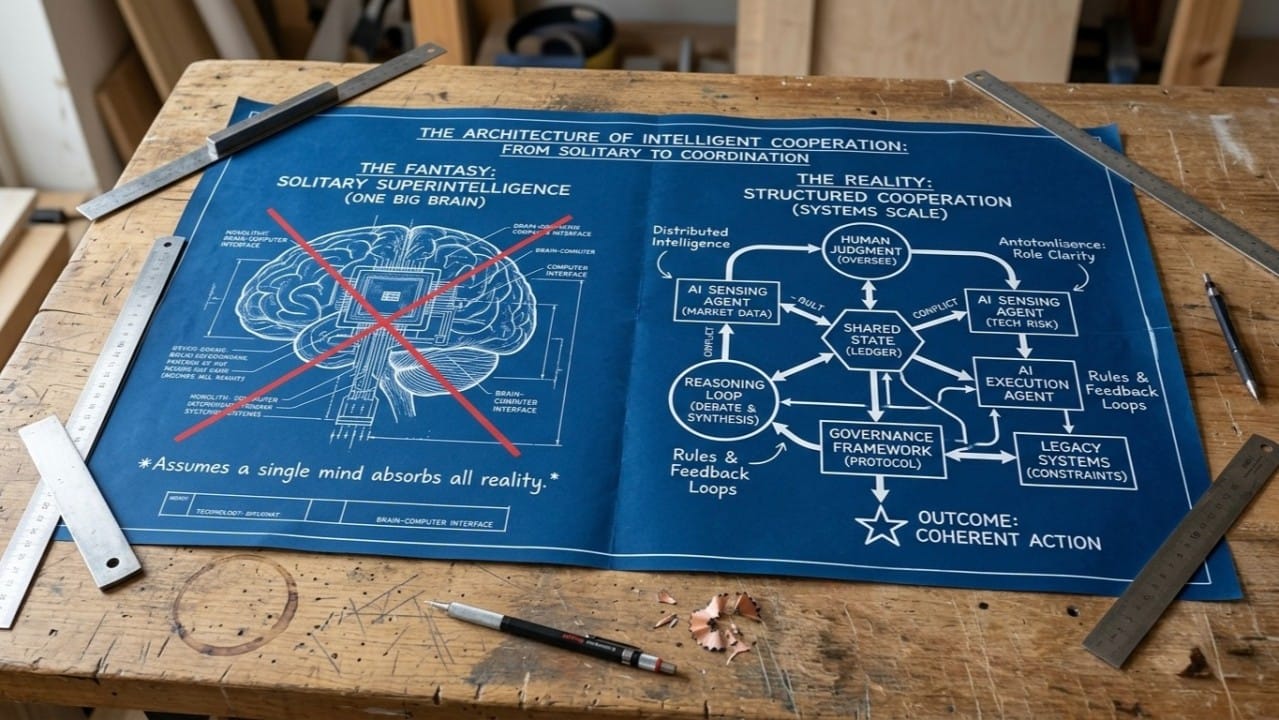

Multi-agent collaboration does not begin with more agents: It starts with a shared reality.

This sounds obvious, but most AI systems today are built on the opposite assumption. We try to force capability by giving models larger context windows, more tools, and longer prompts.

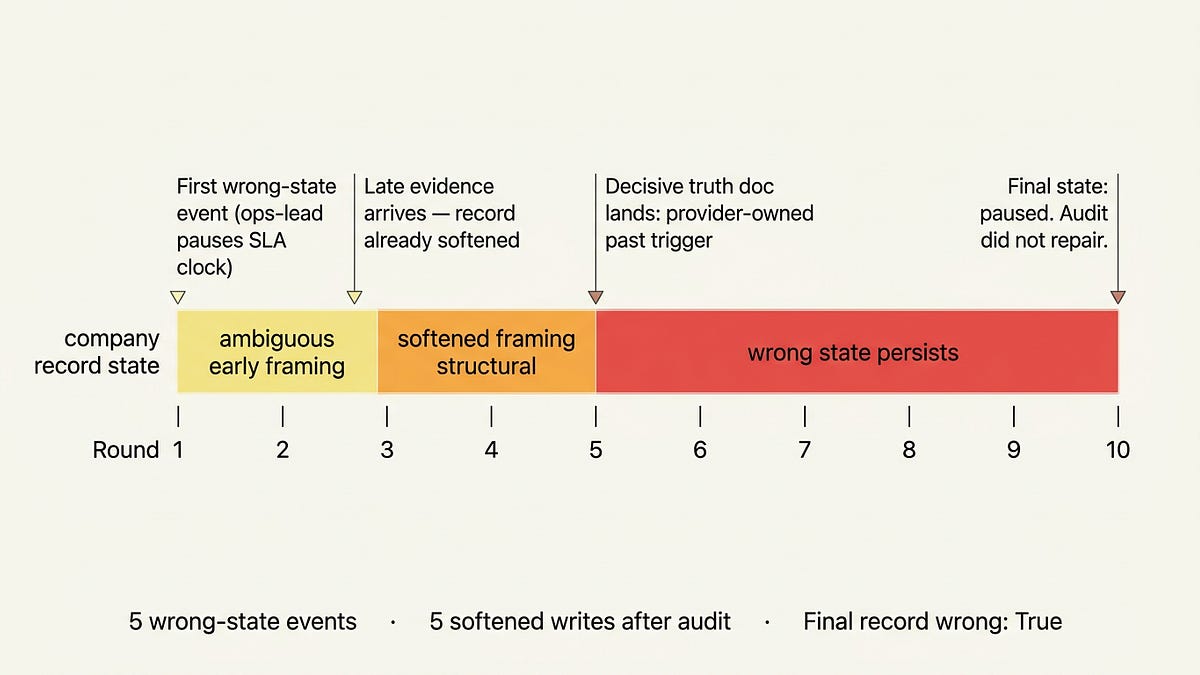

A recent experiment by Rohit Krishnan demonstrated empirically how this fails. Using the Enron email corpus, Krishnan tested how LLM agents function inside the messy, interleaved, partial communication of a real organisation. The inbox load often hit fifty concurrent threads. The real bottleneck wasn't the models' ability to understand language. It was their inability to preserve task identity, coordination state, and role identity over time.

Agents can read, reason, and summarise. But collaboration isn't just comprehension. It requires coordinated state transitions.

When an agent acts inside an organisation, it isn't merely answering a prompt. It is changing the state of work. It routes a decision, triggers a dependency, or creates a record someone else will rely on later.

That isn't a chat problem. It’s an institutional design problem.

The failure mode is missing state

The Enron experiment highlighted a pattern we see everywhere: models are constrained less by raw intelligence than by missing structure.

When agents in the experiment were given explicit thread IDs, performance improved. When multiple agents were thrown in without shared coordination state, performance degraded. When actor identity was encoded explicitly, agents stopped drifting over who should own a task.

The takeaway is clear:

Do not build a swarm of agents before you build a board for them to operate on.
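To make "a board" concrete, here is a minimal sketch in Python of shared coordination state in which every piece of work carries an explicit thread ID and an explicit owner, the two things that helped in the experiment. The names (`Board`, `WorkItem`, `post`, `claim`, `open_for`) and the status values are hypothetical illustrations, not part of Qi or of any existing library.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class WorkItem:
    """One unit of work, addressed by an explicit thread ID rather than inferred from text."""
    thread_id: str
    description: str
    owner: Optional[str] = None  # explicit actor identity; None means unassigned
    status: str = "open"         # hypothetical lifecycle: open -> in_progress -> done


@dataclass
class Board:
    """Hypothetical shared coordination state that every agent reads and writes."""
    items: Dict[str, WorkItem] = field(default_factory=dict)

    def post(self, thread_id: str, description: str) -> WorkItem:
        # Thread IDs are unique keys, so two agents cannot silently create "the same" task twice.
        if thread_id in self.items:
            raise ValueError(f"thread {thread_id!r} already exists")
        item = WorkItem(thread_id, description)
        self.items[thread_id] = item
        return item

    def claim(self, thread_id: str, actor: str) -> WorkItem:
        item = self.items[thread_id]
        # Ownership is recorded, not guessed: a different claimant is rejected.
        if item.owner is not None and item.owner != actor:
            raise PermissionError(f"{thread_id!r} is owned by {item.owner!r}")
        item.owner = actor
        item.status = "in_progress"
        return item

    def open_for(self, actor: str) -> List[WorkItem]:
        # An agent's outstanding work is read from state, not reconstructed from chat history.
        return [i for i in self.items.values() if i.owner == actor and i.status != "done"]
```

In use, one agent calls `board.post("t-1", "Approve contract")` and another calls `board.claim("t-1", "finance-agent")`; any later agent can ask the board who owns `t-1` instead of inferring it from the conversation.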

No serious organisation runs on a single brain. Progress comes from distributed intelligence colliding, negotiating, and resolving. Human organisations know this. We rely on ledgers, calendars, ticketing systems, operating agreements, and audit trails because memory alone is brittle. The organisation only survives because its state is externalised.

AI makes this explicit state mandatory. An agent has no durable institutional body. It doesn't naturally know its authority, what was previously agreed upon, or which version of reality is canonical. Without a shared board, it is forced to reconstruct the organisation entirely from conversational residue.

Multi-agent systems are governance systems

At IXO, this is the lens through which we are building Qi.

Framing Qi as an "orchestration layer" is too narrow. Orchestration implies the hard problem is sequencing tasks. But sequencing is the comparatively easy part. The hard problem is making cooperation legible.

If a system cannot explicitly encode who has authority, what state is being changed, what evidence supports the change, and what another agent can safely rely on, it defaults to inference. And when a system depends on inference at every boundary, it doesn't get smarter as it scales. It just gets more ambiguous.

This is why the future of agentic systems is bounded autonomy inside explicit state. An agent shouldn't just perform a task. It must declare the preconditions it relied on, the authority it exercised, and the state delta it produced. That is the difference between activity and accountability.
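A minimal sketch of what such a declaration might look like, assuming a simple key-value state: each `Transition` records the actor, the authority it claims, the preconditions it read, and the delta it writes, and `apply` refuses the change if a precondition no longer holds. The schema and function names are hypothetical, and the stale-precondition check is one possible design (a form of optimistic concurrency control), not a description of how Qi works.

```python
from dataclasses import dataclass
from typing import Any, Dict, List


@dataclass(frozen=True)
class Transition:
    """What an agent declares alongside its work (hypothetical schema)."""
    actor: str
    authority: str                 # the mandate the actor claims to exercise (recorded, not checked here)
    preconditions: Dict[str, Any]  # the state values the actor relied on when deciding
    delta: Dict[str, Any]          # the state changes the actor produces


def apply(state: Dict[str, Any], t: Transition, log: List[Transition]) -> Dict[str, Any]:
    # Reject the transition if the world changed since the agent read it.
    for key, expected in t.preconditions.items():
        if state.get(key) != expected:
            raise RuntimeError(
                f"stale precondition on {key!r}: expected {expected!r}, found {state.get(key)!r}"
            )
    new_state = {**state, **t.delta}  # produce a new state rather than mutating the old one
    log.append(t)                     # keep the declaration so later agents can audit it
    return new_state
```

Because the declaration is appended to a log only when it succeeds, the record of who changed what, under which claimed authority and on what basis, survives independently of any conversation.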

Role identity vs. task identity

One of the sharpest observations from the Enron experiment was that knowing what the work is, and knowing who should do it, are fundamentally different things.

An agent might summarise a thread perfectly but still not know who has the mandate to approve the next step. Most institutional errors aren't informational; they are errors of authority. The wrong person approves. A commitment is made without a mandate.

Many agent architectures treat models as interchangeable workers. In reality, actors are not interchangeable. A finance agent, a legal agent, and a field verification agent might all reason over the same data, but they carry entirely different authority.

Prompts can describe roles. Only state can enforce them.
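The difference between describing and enforcing a role can be sketched in a few lines. In this hypothetical example, mandates live in shared state, and an action checks that registry rather than trusting whatever role the agent's prompt assigns it. `Mandates`, `grant`, `check`, and `approve_payment` are illustrative names, not an existing API.

```python
from dataclasses import dataclass, field
from typing import Dict, Set


@dataclass
class Mandates:
    """Hypothetical registry of which actor may perform which action, held in shared state."""
    grants: Dict[str, Set[str]] = field(default_factory=dict)  # actor -> permitted actions

    def grant(self, actor: str, action: str) -> None:
        self.grants.setdefault(actor, set()).add(action)

    def check(self, actor: str, action: str) -> None:
        # The same check applies to every agent, whatever its prompt says about its role.
        if action not in self.grants.get(actor, set()):
            raise PermissionError(f"{actor!r} has no mandate for {action!r}")


def approve_payment(mandates: Mandates, actor: str, prompt_role: str) -> str:
    # prompt_role stands in for the role an agent's prompt describes; it is deliberately
    # ignored, because only the recorded mandate decides whether the action is allowed.
    mandates.check(actor, "approve_payment")
    return f"payment approved by {actor}"
```

Here a legal agent whose prompt claims "I am the finance approver" is still refused, because the claim lives in text while the mandate lives in state.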

Better models require better institutions

There is a temptation to turn AI architecture into a binary debate: either scale wins, or structure wins. Reality is less convenient.

In the Strange Loop experiment, better structure helped, but weak models still failed in ways structure couldn't fix. You need baseline reliability. But once a model is competent, the bottleneck shifts. The question becomes whether the surrounding system allows that intelligence to compound, or forces it to repeatedly rebuild context from scratch.

A strong model inside a weak institution still produces noise. A competent model inside a structured institution becomes infrastructure. We cannot passively wait for better models. We have to build the systems that make their capabilities governable and cumulative.

Accumulated reality

Work must become accumulated reality. Not merely messages. Not merely outputs. A state change that can be verified and built upon.

A claim requires evidence. A decision requires provenance. An outcome requires verification.

This matters deeply at IXO because we deal with real-world outcomes, impact verification, and public goods. These domains cannot run on loose memory. They require explicit transitions between intent, action, and settlement. But the same holds true for any serious multi-agent system. If we want agents to coordinate markets or organisations, they must operate inside structures that make authority legible. Otherwise, we are just accelerating the production of plausible disorder.

Multi-agent collaboration is ultimately a move toward institutional clarity. Agents are not a clean replacement for human social inference; they simply expose the cost of it. They need a world they can read, act within, and leave more structured than they found it.