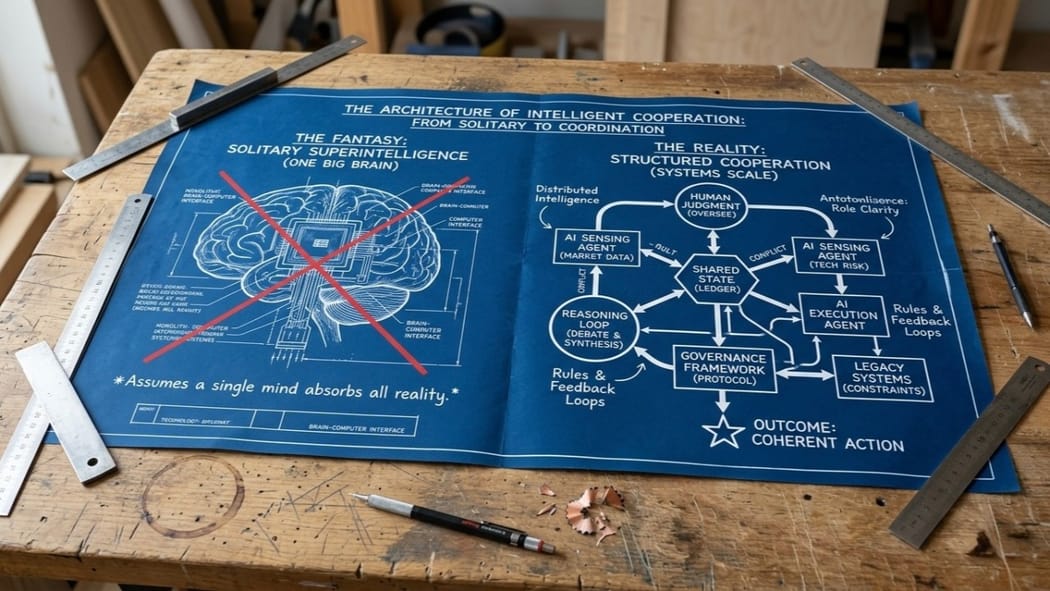

The dominant narratives about AGI, superintelligence, and the singularity all seem to assume that intelligence scales primarily by making unified, global models larger and smarter.

This is a compelling sci-fi trope, but it ignores how intelligence actually works in the real world.

The Paradigms of Intelligence (Pi) research team at Google recently published *Agentic AI and the Next Intelligence Explosion*, in which the authors highlight the flaw in this singularity thinking. They argue that intelligence doesn't scale in isolation. It scales through interaction, role differentiation, conflict, and institutional structure. What matters isn't one giant mind, but the quality of cooperation among many minds, both human and non-human.

"By its nature, intelligence is high-dimensional and relational, not a single quantity that must be unambiguously less or greater than human scale. In fact, it is unclear what we even mean by 'human scale,' given that our intelligence is already a collective property, not an individual one."

This lands because it matches reality. Anyone who has built complex systems knows that no serious organisation runs on one brain. Not a company, not a government, not a distributed engineering team.

Intelligence in practice is always distributed.

Someone sees the technical risk. Someone else sees the market timing. A third person sees the political landmine nobody wanted to name. Progress doesn’t come from a single viewpoint absorbing all of reality; it comes from how those partial perspectives collide, negotiate, and resolve.

If intelligence is inherently social, the engineering problem changes fundamentally. You stop asking, “How do we build the smartest agent?” and start asking, “How do we structure cooperation so that intelligence compounds instead of collapsing?”

The first question leads you toward model size, benchmarks, and compute. It is useful, but incomplete. The second question forces you to confront coordination costs, misaligned incentives, procedural trust, and the messy reality that capability without governance usually just scales your failure modes.

This connects AI to a much older infrastructure. We already have technologies for collective intelligence: language, law, ledgers, markets, and bureaucracy. A ledger is just social memory. A court is structured disagreement. A market is distributed sensing coupled with price formation.

None of these systems work because every participant is perfectly rational or sees the whole picture. They work because rules, boundaries, and feedback loops allow partial, flawed views to combine into something more coherent than the people inside them.

The problem is that our current coordination systems are breaking down.

Teams drown in Slack updates but lack shared state. Institutions accumulate procedure but lose their original purpose. Audits become performative. Nobody trusts the metrics, so everyone reverts to politics, status, or guesswork. Then we act surprised when smart people produce dumb outcomes together.

Throwing AI into a broken bureaucracy doesn’t fix it by default. Often, it accelerates the dysfunction. A badly governed multi-agent system will simply automate bias, accelerate noise, and create the illusion of consensus where none exists.

This is why we need to rethink "alignment." We have spent years treating alignment as a property of an individual model—trying to tune its weights so it behaves safely. But large-scale social systems are rarely made safe by the inherent virtue of their components. They are made safer by architecture: separation of powers, clear boundaries, accountability, and the ability to audit and override.

If AI is going to participate in hiring, health systems, public administration, or financial markets, the question is no longer just whether a model can generate a good answer. The question is whether the surrounding system can handle disagreement, trace authority, and stop bad decisions before they propagate.

This is where intelligent cooperation stops being a theory and starts reshaping society. Not at the level of chat interfaces, but at the level of social operating systems.

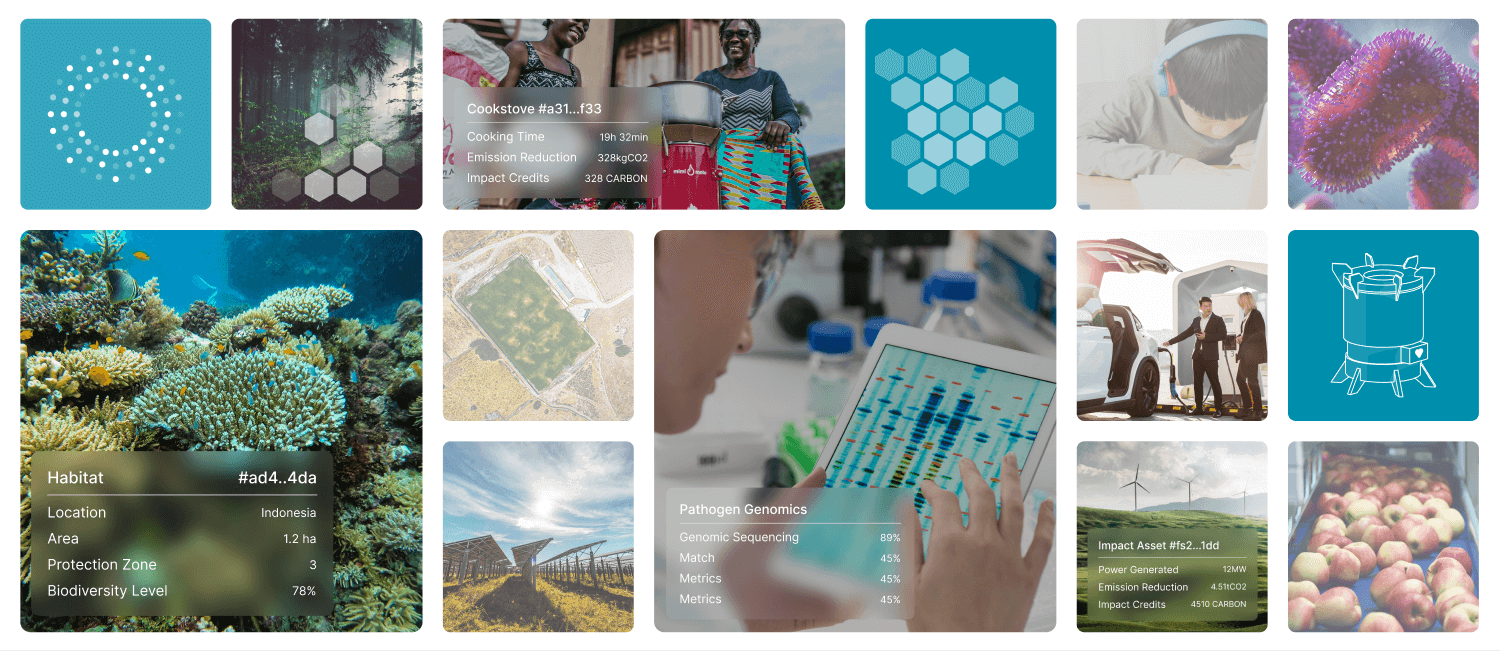

We are talking about environments where humans and agents work over shared state. Where actions are tied to explicit intent. Where delegation is strictly bounded, decisions can be contested, and the record of what happened isn't reconstructed after the fact through meetings and blame, but is produced as a natural byproduct of the work itself. These are the foundational principles on which we have been building our Qi Intelligent Cooperating System.
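To make those principles concrete, here is a minimal sketch in Python of shared state with bounded delegation, explicit intent, contestable decisions, and a record written as a side effect of acting. It is an illustration only, not Qi's actual API: every name in it (`SharedState`, `Delegation`, `Record`, `act`, `contest`, the `procurement-agent` actor, the amount limit) is a hypothetical chosen for the example.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class Delegation:
    """Bounded authority granted to one actor (hypothetical structure)."""
    actor: str
    allowed_actions: frozenset[str]
    max_amount: float


@dataclass
class Record:
    """One log entry, written at the moment an action is attempted."""
    actor: str
    action: str
    intent: str
    amount: float
    accepted: bool
    reason: str
    timestamp: str
    contested_by: list[str] = field(default_factory=list)


class SharedState:
    """State shared by humans and agents; acting and logging are one step."""

    def __init__(self) -> None:
        self.delegations: dict[str, Delegation] = {}
        self.log: list[Record] = []

    def delegate(self, delegation: Delegation) -> None:
        self.delegations[delegation.actor] = delegation

    def act(self, actor: str, action: str, intent: str, amount: float) -> Record:
        """Check the action against the actor's delegation and record the outcome."""
        delegation = self.delegations.get(actor)
        if not intent.strip():
            accepted, reason = False, "missing intent"
        elif delegation is None:
            accepted, reason = False, "no delegation"
        elif action not in delegation.allowed_actions:
            accepted, reason = False, "action outside delegation"
        elif amount > delegation.max_amount:
            accepted, reason = False, "exceeds delegated limit"
        else:
            accepted, reason = True, "within delegation"
        record = Record(
            actor=actor,
            action=action,
            intent=intent,
            amount=amount,
            accepted=accepted,
            reason=reason,
            timestamp=datetime.now(timezone.utc).isoformat(),
        )
        # Rejected attempts are logged too, so the record is never reconstructed later.
        self.log.append(record)
        return record

    def contest(self, index: int, by: str) -> None:
        """Attach a challenge to an existing record instead of rewriting it."""
        self.log[index].contested_by.append(by)


state = SharedState()
state.delegate(Delegation("procurement-agent", frozenset({"approve_purchase"}), 500.0))
ok = state.act("procurement-agent", "approve_purchase", "restock lab supplies", 120.0)
too_big = state.act("procurement-agent", "approve_purchase", "buy a new server", 5000.0)
state.contest(0, by="alice")
```

In this sketch the second action is rejected because it exceeds the delegated limit, yet it still appears in the log alongside the accepted one, and the challenge from `alice` is attached to the first record rather than replacing it. A real system would also need identity, persistence, and a process for resolving contested decisions, none of which is modelled here.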

Qi lets organisations program how intelligence flows through their work.

That is a much deeper shift than simply automating tasks. It means we can start redesigning coordination itself, reducing the massive overhead of structured cooperation without destroying accountability.

There are obvious trade-offs. More agents do not automatically yield more wisdom. Role proliferation can create theatre instead of substance. Internal debate between models might improve reasoning on a math problem, but it doesn't automatically confer legitimacy in public systems.

We need to be honest about the gap between solving for answer quality and solving for social legitimacy. A society is not just a problem-solving machine. People need to know not only that a decision is probably right, but who had the authority to make it, what values shaped it, and what recourse exists when it goes wrong.

The future will not be determined by who builds the smartest solitary model. It will be determined by the quality of the cooperation systems we build around minds that are now multiple, fast, cheap, and active.

The fantasy was replacement. Machine over human. The reality will be entanglement—layered systems of cooperation, conflict, verification, and delegation.

The real work now is not to worship intelligence, but to design the terms under which it cooperates. We need to be asking questions such as:

- What kinds of institutional scaffolding do we need so AI strengthens human judgment instead of bypassing it?

- Where in our systems do we need more autonomy, and where do we deliberately need to introduce friction?

- When agents start thinking together, who is ultimately accountable for what they decide?