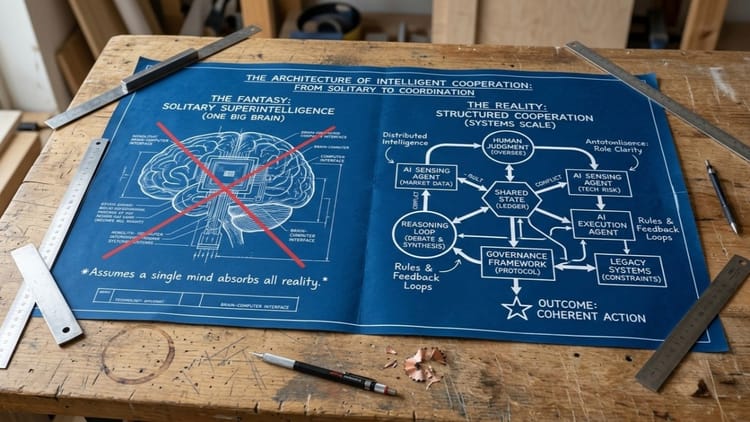

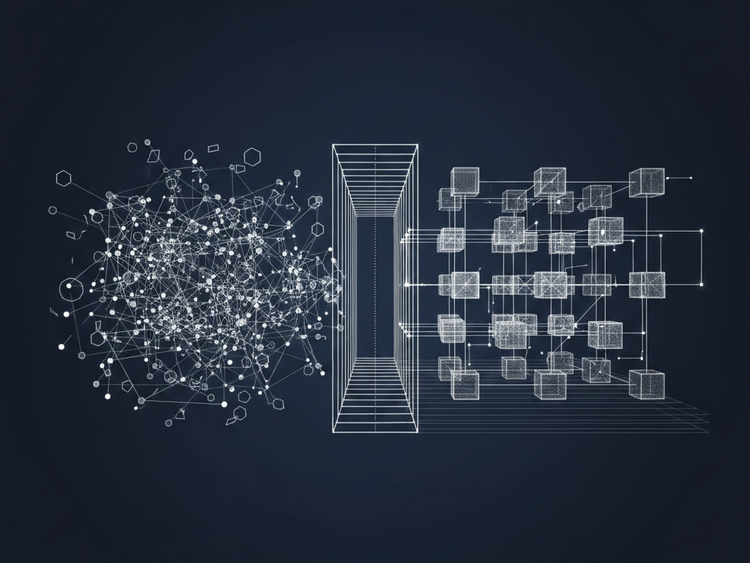

How intelligence actually works in the real world

No serious organisation runs on one brain. Progress comes from distributed intelligence: different, partial perspectives colliding, negotiating, and resolving. A recent paper from the Paradigms of Intelligence (Pi) research team at Google articulates this well.